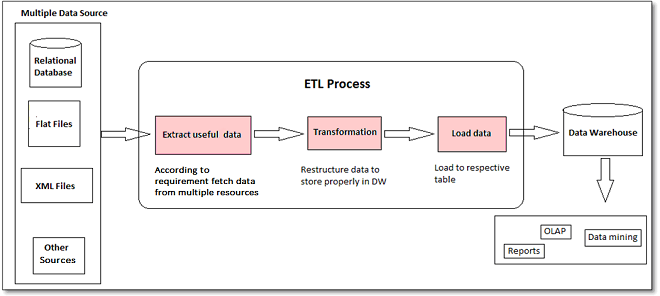

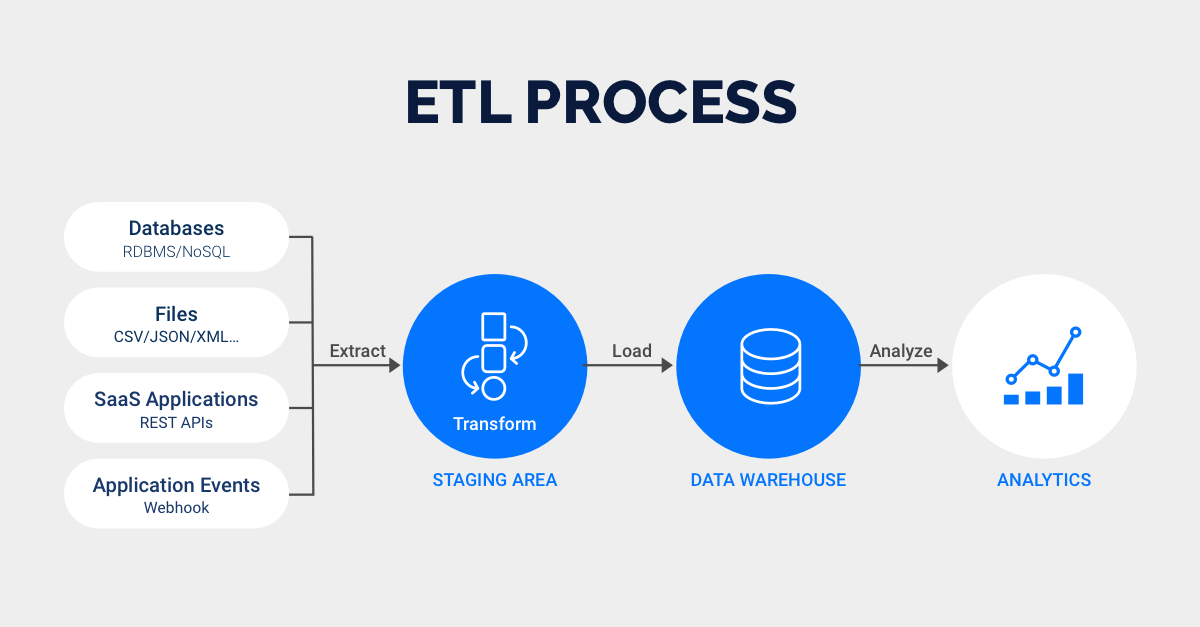

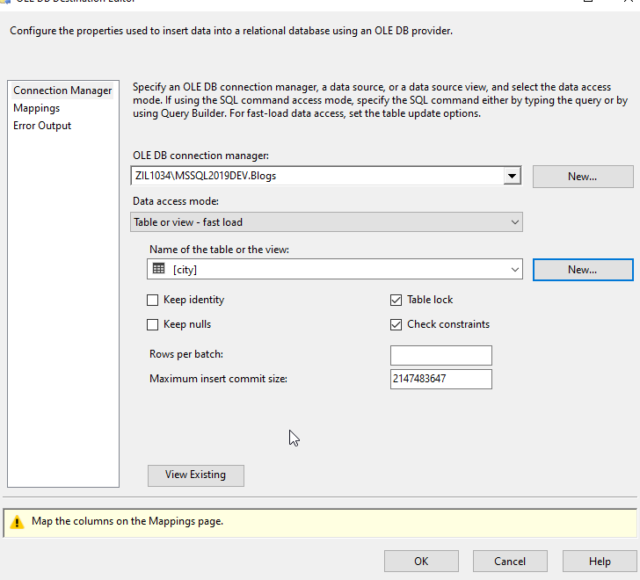

ETL stands for extract, transform, and load. It is a data integration process that combines data from multiple sources into a single, consistent data store, which is then loaded into a data warehouse or other target system, often for analytics purposes. As databases grew in popularity in the 1970s, ETL was introduced as a process for integrating and loading data.

Data can be loaded with a full load or an incremental load. Each approach has its own benefits, shortfalls, and ideal applications, so it's important for every organization to examine its specific goals when deciding which one to use.

Let's say we have a column Full DateTime. It should be used as the column for the incremental refresh. Unfortunately for Power BI, almost all values in the column are unique. Because Power BI compresses a column by taking advantage of repeated values, such a column compresses very poorly, which also means it can be the most space-consuming column in the table.

The process itself has three steps. The first step is extraction, in which an ETL tool extracts the data from the various source systems; the data is then transformed in a staging area and finally loaded into the data warehouse.
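To make the extract, transform, and load steps concrete, here is a minimal sketch in Python using only the standard library. It assumes two hypothetical in-memory "source systems" (`CRM_ROWS` and `SHOP_ROWS`) with inconsistent formats and an in-memory SQLite database standing in for the data warehouse; the table name `dim_customer` and the cleaning rules are illustrative, not taken from any particular ETL tool.

```python
import sqlite3

# Hypothetical source systems: raw records with inconsistent formats.
CRM_ROWS = [{"id": 1, "name": " alice ", "country": "us"},
            {"id": 2, "name": "BOB", "country": "DE"}]
SHOP_ROWS = [("3", "carol", "Fr")]  # a second source that stores rows as tuples


def extract():
    """Extract: pull raw records from each source into one list of dicts."""
    rows = list(CRM_ROWS)
    rows += [{"id": int(i), "name": n, "country": c} for i, n, c in SHOP_ROWS]
    return rows


def transform(rows):
    """Transform (staging): apply simple business rules so the data is consistent."""
    return [(r["id"], r["name"].strip().title(), r["country"].upper()) for r in rows]


def load(conn, rows):
    """Load: write the cleaned rows into the (hypothetical) warehouse table."""
    conn.execute("CREATE TABLE IF NOT EXISTS dim_customer "
                 "(id INTEGER PRIMARY KEY, name TEXT, country TEXT)")
    conn.executemany("INSERT OR REPLACE INTO dim_customer VALUES (?, ?, ?)", rows)
    conn.commit()


if __name__ == "__main__":
    warehouse = sqlite3.connect(":memory:")
    load(warehouse, transform(extract()))
    print(warehouse.execute("SELECT * FROM dim_customer ORDER BY id").fetchall())
```

Real ETL tools add scheduling, logging, and error handling around these three functions, but the division of work is the same: sources are read, data is made consistent in a staging step, and only clean rows reach the target.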

Nowadays, many solutions with graphical user interfaces (GUIs) are available to facilitate ETL processes. ETL is the standard, widely used process for creating and maintaining a data warehouse (DW), and it is typically the most resource-, cost-, and time-demanding part of DW implementation and maintenance. It uses a set of business rules to clean and organize raw data and prepare it for storage, data analytics, and machine learning (ML), so that the resulting data can address specific business intelligence needs. Streaming ETL is becoming increasingly popular compared with batch ETL; by some estimates, more than 60% of companies use it.

Incremental loading is important for several reasons. First, it improves the performance and efficiency of your ETL process, because you only load the data that has changed since the last load. Still, if the source has a mixed or variable pattern of changes, or requires different levels of freshness and quality for different types of data, it's best to use a combination of incremental and full load.
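The idea of loading only the data that has changed since the last load is often implemented with a "watermark": the newest change timestamp seen in the previous run. The sketch below assumes hypothetical tables `src_orders`, `dw_orders`, and `etl_watermark` in one SQLite database, and a source that records an `updated_at` timestamp on every insert or update. It falls back to a full load when no watermark exists yet, and it deliberately does not handle deletes in the source, which a production design would need to address.

```python
import sqlite3

# Hypothetical tables: src_orders stands in for a source system and dw_orders for
# the warehouse table. Both live in one SQLite database only to keep the sketch small.
SCHEMA = """
CREATE TABLE src_orders (id INTEGER PRIMARY KEY, amount REAL, updated_at TEXT);
CREATE TABLE dw_orders (id INTEGER PRIMARY KEY, amount REAL, updated_at TEXT);
CREATE TABLE etl_watermark (table_name TEXT PRIMARY KEY, last_loaded TEXT);
"""


def _save_watermark(conn):
    # Remember the newest change timestamp in the source. ISO-8601 strings
    # written in one fixed format sort correctly when compared as text.
    conn.execute("INSERT OR REPLACE INTO etl_watermark "
                 "SELECT 'orders', MAX(updated_at) FROM src_orders")


def full_load(conn):
    """Full load: wipe the target table and copy every source row."""
    conn.execute("DELETE FROM dw_orders")
    conn.execute("INSERT INTO dw_orders SELECT id, amount, updated_at FROM src_orders")
    _save_watermark(conn)
    conn.commit()
    return conn.execute("SELECT COUNT(*) FROM dw_orders").fetchone()[0]


def incremental_load(conn):
    """Incremental load: upsert only rows changed since the stored watermark."""
    row = conn.execute("SELECT last_loaded FROM etl_watermark "
                       "WHERE table_name = 'orders'").fetchone()
    if row is None or row[0] is None:  # no usable watermark yet: fall back to full load
        return full_load(conn)
    changed = conn.execute("SELECT id, amount, updated_at FROM src_orders "
                           "WHERE updated_at > ?", (row[0],)).fetchall()
    conn.executemany("INSERT OR REPLACE INTO dw_orders VALUES (?, ?, ?)", changed)
    _save_watermark(conn)
    conn.commit()
    return len(changed)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany("INSERT INTO src_orders VALUES (?, ?, ?)",
                     [(1, 10.0, "2024-01-01T09:00:00"), (2, 20.0, "2024-01-01T10:00:00")])
    conn.commit()
    print(incremental_load(conn))  # first run has no watermark, so it does a full load
    conn.execute("UPDATE src_orders SET amount = 25.0, "
                 "updated_at = '2024-01-02T08:00:00' WHERE id = 2")
    conn.execute("INSERT INTO src_orders VALUES (3, 5.0, '2024-01-02T09:00:00')")
    conn.commit()
    print(incremental_load(conn))  # only rows 2 and 3 are copied
```

The watermark approach only works when changes can be identified reliably; if the source has no trustworthy change timestamp, a full load is the safer choice.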

Incremental data extraction is the process of copying only new or changed data from the source to the target system at a preset time interval. Incremental loading can reduce the network traffic, storage space, and processing time required for ETL operations, and it can also minimize the impact on the source and destination systems.

When deciding between incremental and full load, there are several factors to consider, such as the data source characteristics, the data warehouse design, the data quality requirements, and the performance trade-offs. Questions to ask include: How often does the source data change, and how much of it changes? How often do you need to refresh the data warehouse, and how much time do you have for the load process? How do you handle updates, deletes, and conflicts between the source and target data? How do you ensure the accuracy and consistency of the data warehouse? And how do you handle errors, failures, and recovery in the load process?

Generally speaking, incremental load is ideal for large, stable sources with a low frequency and volume of changes that can be identified reliably. Full load is best for small, volatile sources that have a high frequency and volume of changes, or for sources with no reliable way to identify changes.

While the destination can be any storage system, organizations frequently use ETL for their data warehousing projects. One of the most common ways to improve ETL performance is parallel processing, which means running multiple tasks or processes simultaneously.
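As a small illustration of parallel processing, the sketch below runs one extraction task per source at the same time using Python's `concurrent.futures.ThreadPoolExecutor`. The source names and the `extract_from` function are hypothetical; `time.sleep` stands in for waiting on a database, API, or file share. Threads are a reasonable fit here because extraction is mostly I/O-bound; CPU-heavy transformations in CPython would usually call for processes instead.

```python
import time
from concurrent.futures import ThreadPoolExecutor

# Hypothetical source names; in practice each would be a database, API, or file share.
SOURCES = ["crm", "billing", "web_logs", "inventory"]


def extract_from(source):
    """Stand-in for an I/O-bound extraction call (network or disk wait)."""
    time.sleep(0.2)  # simulate waiting on the source system
    return [f"{source}-row-{i}" for i in range(3)]


def extract_all_parallel(sources, max_workers=4):
    """Run one extraction task per source concurrently and merge the results.

    Threads suit I/O-bound work like this; CPU-heavy transformations in CPython
    would usually need processes (ProcessPoolExecutor) because of the GIL.
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        batches = pool.map(extract_from, sources)  # results keep input order
        return [row for batch in batches for row in batch]


if __name__ == "__main__":
    start = time.perf_counter()
    rows = extract_all_parallel(SOURCES)
    elapsed = time.perf_counter() - start
    # The four 0.2 s waits overlap, so this takes roughly 0.2 s rather than 0.8 s.
    print(len(rows), f"rows in {elapsed:.2f} s")
```

The same pattern applies to loading independent partitions or tables in parallel, as long as the target system can absorb the concurrent writes.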
